Charging is most confusing. I have had batteries go into decline after 130 charges, when promised a minimum of 400 charge cycles, simply by following the directions that came with the battery. I always fully charged the battery after each ride. Knowing the percentage of charge is but a wild guess. I'm assuming that a very low percentage is better than 100 percent when the battery is not in use. Is partial charging OK if one is not sure how low the remaining charge is, or does that use up your charge cycles prematurely?

Partial Charging

- Thread starter Dave S

addertooth

Well-known member

For longer term storage, you want a lower charge level (I hear about 60 percent thrown around for this purpose). Running a battery down to one bar or less also shortens life a bit. I tend to top off when I am about 2 to 3 bars down from the top.

I guess the question I'm asking is does it make sense to charge the battery partially after riding and then charge to full right before the next ride? I need close to a full charge to get the distance I want to go on a single trip. Does it harm or age the battery to charge in 2 sessions instead of one? From what I'm reading, it wouldn't.

addertooth

Well-known member

Lithium Ion batteries age more gently if the voltage is closer to the middle range. Unless I am planning on a long trip the next day, if it is just one or two bars down, it does not get charged. I usually charge at about three bars.

The difference is not large, but it is statistically better to not keep the battery topped off to the highest voltage.

Typically, I ride until I just hit 2 bars. At one bar, the battery turns itself off. Is it best to let the battery sit at 2 bars and then charge to near full right before next use, or charge to 50 percent right after the ride and then complete the charge immediately before the next ride. The period of sitting would be 1 - 3 days.

addertooth

Well-known member

Yes, as most have learned, the whole "bar scale" is pretty vague. On one occasion, the bike hit one bar while it was 5 miles from home. I set it to a lower setting, PAS 2, and rode it gently the rest of the way home.

In the eBike space this is confusing, since eBike chargers are built as "dumb chargers": they shut off only at 100% charge, and you cannot set them to stop at a lower percentage, let's say 80%.

In general, eBike enthusiasts say:

1. ONLY charge after a ride AFTER battery cells have had the time to drop to room temp.

2. Normally charge to 80%

3. Charge to 100 % only when you know you will need 100% for your next ride.

4. "Slow charging is good"

5. In winter best to keep your eBike battery inside - c

5. Many owners use a "Timer Outlet" - this style has been oft mentioned: CLICK $15 HERE OUTDOOR TIMER OUTLET

Links to Amazon may include affiliate code. If you click on an Amazon link and make a purchase, this forum may earn a small commission.

pagheca

Well-known member

I agree with you @fabbrisd except - maybe - for one point:

> 2. Normally charge to 80%

I have never understood whether this means "to a maximum of 80%" or "up to 80%". The manual of my battery, a Bosch 625 Wh, says that the battery should be left between 30 and 80%, but I wonder if around 50% would be even better. Probably yes, although to gain an insignificant amount of battery lifetime.

This excellent manual by Bosch indicates that:

> The ideal charge status for lengthy periods of storage is approx. 30 to 60%

I guess this applies to at least any Li-Ion battery too, if not to every rechargeable battery.

Smaug

Well-known member

Quick answers

> Charging is most confusing and have had batteries go into decline after 130 charges when promised a minimum 400 charge cycles simply by following the directions that came with the battery. I always fully charged the battery after each ride.

They were bad directions.

> Knowing the percentage of charge is but a wild guess. I'm assuming that a very low percentage is better than 100 percent when the battery is not in use.

50-60% is ideal when not in use.

> Is partial charging ok if one is not sure how low the remaining charge is or does that use up your charge cycles prematurely?

Partial charging is encouraged these days. The guidance for best battery life is to keep it between 20-80%. It's OK to charge to 100%, but ideally just before a long ride, so that it will not sit around at 100%. For some reason, lithium batteries don't like that.

The only exception I can think of is a shady company that wants you to wear out your battery sooner so they can sell you another one.

What I did for my last purchase was to buy a bike that had more battery than I need, just so I could operate mostly in that 20-80% range most of the time, but have the option to occasionally fully charge and use the entire charge when needed.

I'm assuming that a very low percentage is better than 100 percent when the battery is not in use. Is partial charging ok if one is not sure how low the remaining charge is ?

Does your controller tell you the battery voltage? Just monitor it while charging and turn the charger off when it displays 80% of full charge voltage (which is roughly equal to the 'theoretical' (for want of knowing the correct term) capacity of the battery cells). 100% is an 'overcharged' state, which is why it shortens battery life.
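As a rough check on what "80% of full charge voltage" works out to in volts, the arithmetic can be sketched as below. The 14-series pack and the 4.2 V per cell figure are assumptions borrowed from later posts in this thread, not from this poster, and the function names are made up for illustration. Read literally, 80% of the full-charge voltage comes to about 3.36 V per cell, which sits well below the middle of a lithium-ion cell's voltage range, so a percentage of voltage is not the same thing as a percentage of charge. The display voltage that matches an 80% charge has to come from how your own pack behaves.

```python
# Sketch only: pack-voltage arithmetic under stated assumptions.
CELL_FULL_V = 4.2   # assumed volts per cell at full charge
SERIES_CELLS = 14   # hypothetical 14S pack


def pack_full_voltage(series_cells: int, cell_full_v: float = CELL_FULL_V) -> float:
    """Full-charge pack voltage: cells in series add their voltages."""
    return series_cells * cell_full_v


def per_cell_voltage(pack_voltage: float, series_cells: int) -> float:
    """Average voltage per series group for a given pack voltage."""
    return pack_voltage / series_cells


full_v = pack_full_voltage(SERIES_CELLS)
literal_80_v = 0.8 * full_v  # "80% of full charge voltage", read literally

print(f"Full pack voltage:        {full_v:.2f} V")
print(f"80% of that voltage:      {literal_80_v:.2f} V")
print(f"Which is, per cell:       {per_cell_voltage(literal_80_v, SERIES_CELLS):.2f} V")
```

The same `per_cell_voltage` helper can be used on any voltage the display shows, which makes readings easier to compare between packs with different series counts.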

Love levo

New member

My Specialized Levo has a battery meter in percentages, from 0% to 100%. It has a feature, which I have turned on, to charge only up to 80% and then shut off.

m@Robertson

Well-known member

> I guess the question I'm asking is does it make sense to charge the battery partially after riding and then charge to full right before the next ride?
>
> <snip>
>
> Does it harm or age the battery to charge in 2 sessions instead of one? From what I'm reading, it wouldn't.

Yes it makes perfect sense to do that and it won't hurt a thing. The damage done to battery capacity (accelerated wear is a better way to describe it) happens when you charge to full and let it sit like that for a period of time. Charging to 100% and going straight out and riding it completely minimizes the deleterious effects of a 100% charge. NEVER let a pack sit at 100% is a good rule.

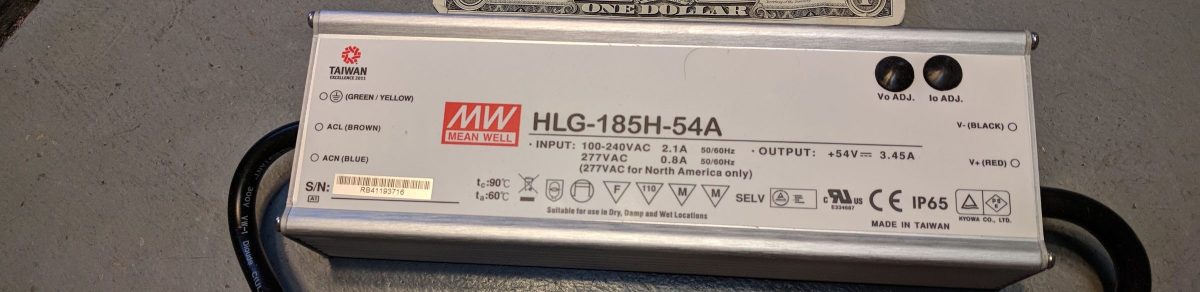

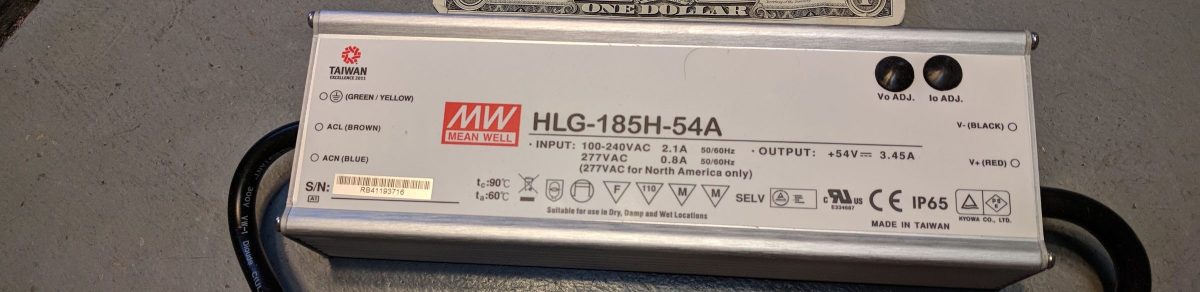

> In the eBike space - it is confusing - since eBike chargers are built as "dumb chargers" - they will shut off at 100% charge only - you cannot set them to stop at a lower % - lets say 80%.

That is only true of chargers that are not adjustable. Some are. Additionally, a li-ion 'charger' that uses 'smart charging' is nothing more than a power supply that operates in 'CC+CV mode'. Which means you can take an ordinary lab power supply that has dials for current level and voltage and poof, you have yourself a li-ion charger with near-infinite adjustability and a digital display.

But not infinite lifespan or absolute reliability. To get that you need to either buy a Grin Satiator for $300+ (which is an LED power supply with a display screen and some onboard memory) or use a commercial led power supply meant for municipal streetlights and commercial signage that has an MTBF measured in hundreds of thousands of hours.

An Ultra Reliable Ebike Battery Charger…

How about a charger – quickly adjustable for voltage and current – that is rated for hundreds of thousands of hours of use before it typically fails?

talesontwowheels.com

> 1. ONLY charge after a ride AFTER battery cells have had the time to drop to room temp.

What you really need to see is that the pack is no longer increasing in temperature. Once it starts dropping (use a cheap thermometer probe taped to the casing) you can start charging. This is only meaningful if you are riding in 100-degree weather or worse. Otherwise fuggedaboudit.

> 3. Charge to 100 % only when you know you will need 100% for your next ride.

OR once per month on a regular ride as a 'balance charge' to ensure the cell groups are all running at equal levels. I am giving the extremely short version here and skipping the details.

> 4. "Slow charging is good"

VERY good. I like to use 1 amp but I have gone as low as 0.20 amps, which is about 10 watts fed into the battery. Do-able on those lab power supplies. The commercial LED drivers only go down to about 0.90 amps.

> 5. In winter best to keep your eBike battery inside - c

It's not a BAD idea, but there is nothing wrong with letting a li-ion battery sit in below-freezing temperatures. What is bad is charging it down near freezing. Using the battery in freezing weather, it just drains faster than normal. Charging it in freezing temps, on the other hand, permanently damages it.

> 5. Many owners use a "Timer Outlet" - this style has been oft mentioned: CLICK $15 HERE OUTDOOR TIMER OUTLET

That is actually the wrong kind of timer. What you linked is a 24-hour timer that works on a regular schedule. It will turn an appliance on and off at specific times of the day and night. What you want is a 'cutoff timer'... a kitchen timer you set for 30 minutes or an hour or two, so it starts when you tell it to and shuts off after a while.

I did a writeup on how to manage this whole deal, including a $9.99 timer and how to figure out how long to set your timer for on any given day, here.

Ebike Battery Charge Safety: Use A Cutoff Timer

Lets easily add a layer of extra protection to help safeguard your home and loved ones from a battery fire.

talesontwowheels.com
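The linked writeup is not reproduced here, but one rough way to estimate how long to set a cutoff timer can be sketched as follows. This is a minimal sketch, not the author's method: it assumes the charger delivers a constant current the whole time (it ignores charging losses and the tapering that happens near full), and the pack capacity, percentages, and the `timer_hours` name are all hypothetical example values.

```python
def timer_hours(capacity_ah: float, current_pct: float,
                target_pct: float, charger_amps: float) -> float:
    """Estimate hours of charging needed to go from current_pct to target_pct.

    Assumes a constant charge current, so treat the result as a rough figure
    and round it down rather than up.
    """
    if charger_amps <= 0:
        raise ValueError("charger_amps must be positive")
    if target_pct <= current_pct:
        return 0.0  # already at or above the target, nothing to add
    ah_needed = capacity_ah * (target_pct - current_pct) / 100.0
    return ah_needed / charger_amps


# Hypothetical example: 15 Ah pack, 30% remaining, 80% target, 2 A charger.
hours = timer_hours(capacity_ah=15.0, current_pct=30.0,
                    target_pct=80.0, charger_amps=2.0)
print(f"Set the cutoff timer for about {hours:.2f} hours")
```

Since the remaining-charge figure is itself a guess on most displays, the estimate is only as good as that starting number.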

Over the years I have seen smart people open them up and announce that they are pretty flimsy inside, so as a result I made timers using components that are residential-electrical-grade. A few bucks more to do it this way but nowhere near the deductible on your fire insurance for your home:

Ebike Battery Charge Safety: Heavy Duty Cutoff Timer

Add a safety layer to ebike charging practices, with residential/commercial grade components rather than the cheap stuff.

talesontwowheels.com

Smaug

Well-known member

Just a couple of clarifications to m@'s post.

> What you really need to see is the pack is no longer increasing in temperature. Once it starts dropping (use a cheap thermometer probe taped to the casing) you can start charging. This is only meaningful if you are riding in 100-degree weather or worse. Otherwise fuggedaboudit.

The cell manufacturers recommend letting it cool from the high current discharge before charging, so temperature is an issue, but I'm curious why you believe it has more to do with dropping in temperature, rather than at room temperature or something.

> OR once per month on a regular ride as a 'balance charge' to ensure the cell groups are all running at equal levels. I am giving the extremely short version here and skipping the details.

How would this balance charge the pack? Any cell balancing (if present) would be handled by the BMS internal to the pack, since the charging & discharging only use the ends of the series of cells.

> VERY good. I like to use 1 amp but I have gone as low as 0.20 amps which is about 10 watts fed into the battery. Do-able on those lab power supplies. The commercial LED drivers only go down to about 0.90 amps.

1 A is really slow and ultra-conservative. 0.2 A is ridiculously so for these 18650 cells with at least 4 in each parallel cluster. That would equate to a 0.033 A (33 mA) charge current per cell. There's no benefit in charging that slow. Even charging at 1 A would yield a 167 mA charge current; that also is a trickle charge.

Even the 2 A that my chargers put out is a trickle charge, at 667 mA/cell.

As you alluded to, it's mostly about heat. One could argue that it's better to let the pack cool to room temp, then charge @ 4 A than to charge a warm pack at 2 A.
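The per-cell figures in this post come from splitting the charger current evenly across the cells in each parallel group. A minimal sketch of that arithmetic follows. The parallel counts tried here are assumptions, since the post only says "at least 4" per cluster; as it happens, the 33 mA and 167 mA figures match a divisor of 6, and 667 mA for 2 A matches a divisor of 3. The function name is made up for illustration.

```python
def per_cell_current_ma(charger_amps: float, parallel_cells: int) -> float:
    """Charge current per cell in mA, assuming the parallel cells share it evenly."""
    if parallel_cells < 1:
        raise ValueError("parallel_cells must be at least 1")
    return charger_amps * 1000.0 / parallel_cells


# Try the charger currents from the thread against a few assumed parallel counts.
for amps in (0.2, 1.0, 2.0):
    for parallel in (3, 4, 6):
        ma = per_cell_current_ma(amps, parallel)
        print(f"{amps:.1f} A across {parallel}P -> {ma:.0f} mA per cell")
```

To use it for your own pack you need the real parallel count (the "P" in a designation such as 14S10P), which is not always printed on the case.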

m@Robertson

Well-known member

> The cell manufacturers recommend letting it cool from the high current discharge before charging, so temperature is an issue, but I'm curious why you believe it has more to do with dropping in temperature, rather than at room temperature or something.

Mostly because I'm paying much closer attention to the situation at a deeper level, going down to pack construction and which cells I use. I'm typically after a pack build that simply doesn't increase in temperature from high current discharge. For example, you can use Samsung 25R cells and flog the bejesus out of them, and that cell is known not to heat up. Its only drawback is that it's not particularly energy dense, so you need more cells to make a high capacity pack... but if you make a 14S10P 25R pack there's pretty much nothing you can do to heat it up because it's so big. So yeah, sure, if I had a 14S3P pack of Samsung 30Q's, which are meant to run hot and they really, really do, then you are going to have a whole different set of rules to play by.

Also, I charge typically at 1 amp or less. So my charging level is going to be so low I will not be heating up the pack from charging. Take that into account and the cells chosen, and on a 100-degree day I know from my onboard temp sensor my cells are nowhere near the thermal runaway temp in the neighborhood of 140 degrees... if the pack is decreasing in temperature then its going to keep decreasing. 1a of current being fed into it isn't going to move the needle on heat (volts x amps = watts so I am feeding in only about 50 watts into a 25-30ah pack... peanuts).

And if the pack is running roughly at ambient temp +5 degrees Fahrenheit at most when it's 100+ out... that means on a 60-degree day my pack is at 60-62 (not a guess, since I have that temp sensor). That's not a temperature to be in any way concerned about.

> How would this balance charge the pack? Any cell balancing (if present) would be handled by the BMS internal to the pack, since the charging & discharging only use the ends of the series of cells.

The BMS only balances the pack when the charge level reaches the ballpark of 97 or 98%. So if you are charging to 80% regularly, your BMS is not balancing. There are some very rare Bluetooth BMSes that allow balancing at lower levels, but they may as well be unicorns. So if you are doing 80% charges, and doing them daily, a once-a-month balance charge at 100% is a decent preventative. Note a balance charge means you have to let the pack sit for a few hours at 100% while connected. I usually use my Satiator for them, as you can see it flick on and off at decreasing frequency as the cell groups balance.

> 1 A is really slow and ultra-conservative. 0.2 A is ridiculously so for these 18650 cells with at least 4 in each parallel cluster. That would equate to a 0.033 A (33 mA) charge current per cell. There's no benefit in charging that slow. Even charging at 1 A would yield a 167 mA charge current; that also is a trickle charge.

No. Less current is less heat into the pack. And low current is less risk in general for anything unforeseen. Yes, I am decreasing a small risk to a really small risk. 0.2a at say 55v equates to putting 11w of power thru the wire. Say I have a pre-determined route, and I know I am going to set the bike in a garage for 9 hours (all of the work day). If it's charging that full 9 hours, then when I get back down to it it'll have about 54.5v, which is plenty to get me home. AND charging at such a measured rate eliminates any concern about overcharging, since such a low rate makes it impossible for that to happen. So where is the problem? I'm taking advantage of my schedule and doing only what is necessary to do the job I need.

That pic of the power supply I posted above was in my office garage, and it was feeding in 0.30a as you can see. If I had to make a midday run to the bank, I'd kick it up to 0.50a or maybe even a whole 1.0a. There's no need for more as, again, I know my route and my power needs and these rates meet them.
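The numbers in these two posts follow from two simple relations: power is volts times amps, and charge added is amps times hours. A minimal sketch of both is below. The 55 V, 0.2 A, 0.3 A and 9-hour figures come from the posts; the 50 V value used for the "about 50 watts at 1 amp" case and the 25 Ah capacity (the low end of the 25-30 Ah mentioned) are assumptions for illustration, and the sketch ignores charging losses. The function names are made up.

```python
def charge_power_watts(volts: float, amps: float) -> float:
    """Power fed into the pack: volts x amps = watts."""
    return volts * amps


def amp_hours_added(amps: float, hours: float) -> float:
    """Charge added at a constant current, ignoring losses."""
    return amps * hours


def percent_of_pack(ah_added: float, capacity_ah: float) -> float:
    """Charge added as a percentage of pack capacity."""
    return 100.0 * ah_added / capacity_ah


print(f"0.2 A at 55 V: {charge_power_watts(55.0, 0.2):.0f} W")
print(f"1.0 A at 50 V: {charge_power_watts(50.0, 1.0):.0f} W  (50 V is an assumption)")

# A 9-hour work day on the charger at the two low rates mentioned.
for amps in (0.2, 0.3):
    added = amp_hours_added(amps, 9.0)
    share = percent_of_pack(added, 25.0)  # assumed 25 Ah pack
    print(f"{amps:.1f} A for 9 h adds {added:.1f} Ah, about {share:.1f}% of a 25 Ah pack")
```

This is why a slow all-day charge only suits a known, short route: it replaces a modest slice of a large pack rather than refilling it.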

> As you alluded to, it's mostly about heat. One could argue that it's better to let the pack cool to room temp, then charge @ 4 A than to charge a warm pack at 2 A.

What do you have that is concrete to back this specific process up? I think this kind of approach only has merit if you are not fully aware of your real time pack temps, pack construction and cell type in the first place, so you fudge your process in both directions (cooling and heating) in the hopes of creating something that doesn't hurt too bad.

I mean... how hot do you think a battery pack gets? If its so hot you genuinely need to cool it off, then in my mind that means there was a failure (or a cost compromise) much earlier on in the build process.

addertooth

Well-known member

Here is a link to a decent article written at the Technician level on Lithium-Ion batteries.

Summary:

The 4.2 volts per cell voltage and the Watt-Hour capacity of Lithium-Ion cells are both temperature dependent.

Internal discharge rate is temperature dependent (loss of power over time is temperature dependent).

i.e. Many chargers "assume" your battery needs about 4.2 volts per cell to be fully charged, but if the battery is exceptionally cold, 4.2 volts is actually too much. If your battery is exceptionally hot, it represents less than a full charge at 4.2 volts.

Most calculations used for ideal charge "assume" roughly 25 degrees C ambient temperature. A few degrees won't matter. 20 degrees C hotter or colder does.

Separate eBike battery issues:

Some battery packs have thermistors or thermocouples to report cell temperature to the BMS, and the BMS can adjust the peak voltage curve some. But not all packs have this feature. The only way to know for sure would be cutting into the pack. Many packs which "brag" about thermal overload protection are doing simply that, bragging; they don't have the circuitry (or the thermal protection is ONLY for the FETs in the BMS). The most recent battery pack I cut into claimed to have this feature, but it didn't in reality. If it had been present, a smart BMS could have used the temperature reading to tweak the charge curve.

Colorful narrative:

When I was in Iraq, my unit was complaining their Lithium batteries were not giving the expected run-time on the battlefield equipment. I asked for them to show me the charging station. It was resting on the bench, in the blazing Sun, and had a surface temperature of over 150 Degrees F.

Ambient temperature in the shade hit 128 degrees F. on some days.

I told them to move the charger back inside their air-conditioned shipping container (which was their workshop). Once the batteries were charged at a temperature of about 25 degrees C., battery life suddenly started lasting within the published specifications for the equipment.

The article is easy reading, and not terribly technical, but creates a great foundation to discuss batteries, usable capacity, and charging.

Richtek technical article Lithium Ion

pagheca

Well-known member

Excellent summary. I agree on everything you (and @Smaug) wrote. The only problem is how much this impacts the lifespan of the battery pack. Sometimes in the past, I had to cancel a ride for a week after charging to 100%. I wonder if this means one less charge in the lifespan of the battery pack or 10... Any data available?

I would really like to build my own additional battery pack and hope for some assistance. Although I am not a qualified electronics engineer, I have quite good knowledge and designed a couple of circuit boards years ago to control instrumentation. I just have to find the time and better understand what is required.

No matter how well you treat your lithium battery, you're only going to get 3 years of like new operation out of it. In the 4th year it will start going downhill, and in the 5th year internal resistance will be high enough that sag, and reduced capacity will become a major issue, and nothing you do will have much effect on changing this.